Google Launches Gemini 3.1 Pro, More Than Doubling Reasoning Performance on ARC-AGI-2

Google has announced the release of Gemini 3.1 Pro, an upgraded core model designed for complex reasoning and advanced problem-solving tasks. The model is being rolled out across consumer apps, developer tools, and enterprise platforms.

According to Google, Gemini 3.1 Pro achieved a verified score of 77.1% on ARC-AGI-2, more than doubling the reasoning performance of Gemini 3 Pro on that benchmark.

Part I — What Happened (Verified Information)

Model Release

Google confirmed that Gemini 3.1 Pro is now rolling out in preview across:

For Developers:

Gemini API in Google AI Studio

Gemini CLI

Google Antigravity (agentic development platform)

Android Studio

For Enterprises:

Vertex AI

Gemini Enterprise

For Consumers:

Gemini app (higher limits for AI Pro and Ultra plans)

NotebookLM (exclusive to Pro and Ultra users)

The release follows a recent update to Gemini 3 Deep Think, which Google positioned as targeting scientific, research, and engineering challenges.

Benchmark Performance

On ARC-AGI-2 — a benchmark designed to evaluate a model’s ability to solve novel logic patterns — Gemini 3.1 Pro achieved:

77.1% verified score

More than double the performance of Gemini 3 Pro on the same benchmark

Google frames this as evidence of improved “core reasoning.”
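The "more than double" claim can be turned into a concrete bound using only the figures reported above. The short sketch below is an illustration, not something Google published: it computes the highest score Gemini 3 Pro could have had for the statement to hold. The function name `implied_upper_bound` is made up for this example.

```python
# Figure reported by Google for Gemini 3.1 Pro on ARC-AGI-2 (percent).
new_score = 77.1


def implied_upper_bound(new: float, factor: float = 2.0) -> float:
    """Return the prior score at which `new` would be exactly `factor` times it.

    If the new score is "more than" `factor` times the old one, the old
    score must lie strictly below this value.
    """
    return new / factor


bound = implied_upper_bound(new_score)
print(f"Gemini 3 Pro must have scored below {bound:.2f}% on ARC-AGI-2")
```

In other words, the announcement implies that Gemini 3 Pro scored under 38.55% on the same benchmark, a gap of more than 38.55 percentage points.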

Functional Capabilities

Google highlighted practical applications, including:

Advanced reasoning workflows

Complex data synthesis

Visual explanations of technical topics

Generation of animated, website-ready SVG graphics directly from text prompts
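To make the last item concrete, here is a minimal sketch of what an "animated, website-ready SVG" can look like and how a developer might sanity-check one before embedding it in a page. The SVG below is hand-written for illustration and is not model output. The helper `is_animated_svg` is a hypothetical name, and it only detects SMIL animation elements such as `<animate>`; SVGs animated purely through CSS would not be detected by this check.

```python
import xml.etree.ElementTree as ET

SVG_NS = "http://www.w3.org/2000/svg"

# Hand-written example (NOT model output): a circle whose radius pulses.
svg_text = f"""<svg xmlns="{SVG_NS}" viewBox="0 0 100 100" width="100" height="100">
  <circle cx="50" cy="50" r="20" fill="steelblue">
    <animate attributeName="r" values="20;40;20" dur="2s" repeatCount="indefinite"/>
  </circle>
</svg>"""

# SMIL animation elements defined by the SVG specification.
ANIMATION_TAGS = {
    f"{{{SVG_NS}}}{name}"
    for name in ("animate", "animateTransform", "animateMotion", "set")
}


def is_animated_svg(text: str) -> bool:
    """True if text is well-formed XML with an <svg> root and a SMIL animation."""
    try:
        root = ET.fromstring(text)
    except ET.ParseError:
        return False  # not well-formed, so not safe to embed as-is
    if root.tag != f"{{{SVG_NS}}}svg":
        return False  # root element is not a namespaced <svg>
    return any(el.tag in ANIMATION_TAGS for el in root.iter())


print(is_animated_svg(svg_text))
```

A check like this covers structure only. It says nothing about whether the graphic matches the prompt, which still needs a visual review.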

The model is currently available in preview as Google collects feedback before broader general availability.

Part II — Why It Matters (Strategic & Technical Analysis)

The Shift Toward Core Reasoning Metrics

ARC-AGI-2 evaluates a model’s ability to solve unfamiliar logical tasks rather than rely on memorized patterns.

Improved scores suggest:

Enhanced generalization capability

Stronger pattern abstraction

More reliable multi-step reasoning

However, benchmark gains do not always translate directly into real-world reliability. How consistently the model performs across varied enterprise workloads will determine its practical impact.

Agentic Workflow Expansion

The release references “agentic development” and Google Antigravity — signaling a strategic focus on AI systems capable of:

Multi-step task execution

Iterative refinement

Semi-autonomous development processes

This positions Gemini not just as a chatbot, but as an embedded intelligence layer within developer ecosystems.

Platform Consolidation Strategy

By integrating Gemini 3.1 Pro across consumer apps, enterprise AI platforms, and developer tooling, Google reinforces its vertical integration approach.

This strategy strengthens:

Subscription tier differentiation (AI Pro and Ultra plans)

Enterprise lock-in via Vertex AI

Developer dependency on Gemini APIs

Competitive Landscape

The generative AI race increasingly centers on:

Benchmark reasoning performance

Agent orchestration capability

Multimodal integration

Gemini 3.1 Pro’s improvements reflect intensifying competition with other frontier AI providers, particularly in enterprise reasoning and development workflows.

Part III — Risk & Outlook

Opportunities

More reliable AI-assisted engineering

Advanced enterprise automation

Improved data synthesis for research and analytics

Higher-value subscription tiers

Risks

Over-reliance on benchmark performance as a marketing signal

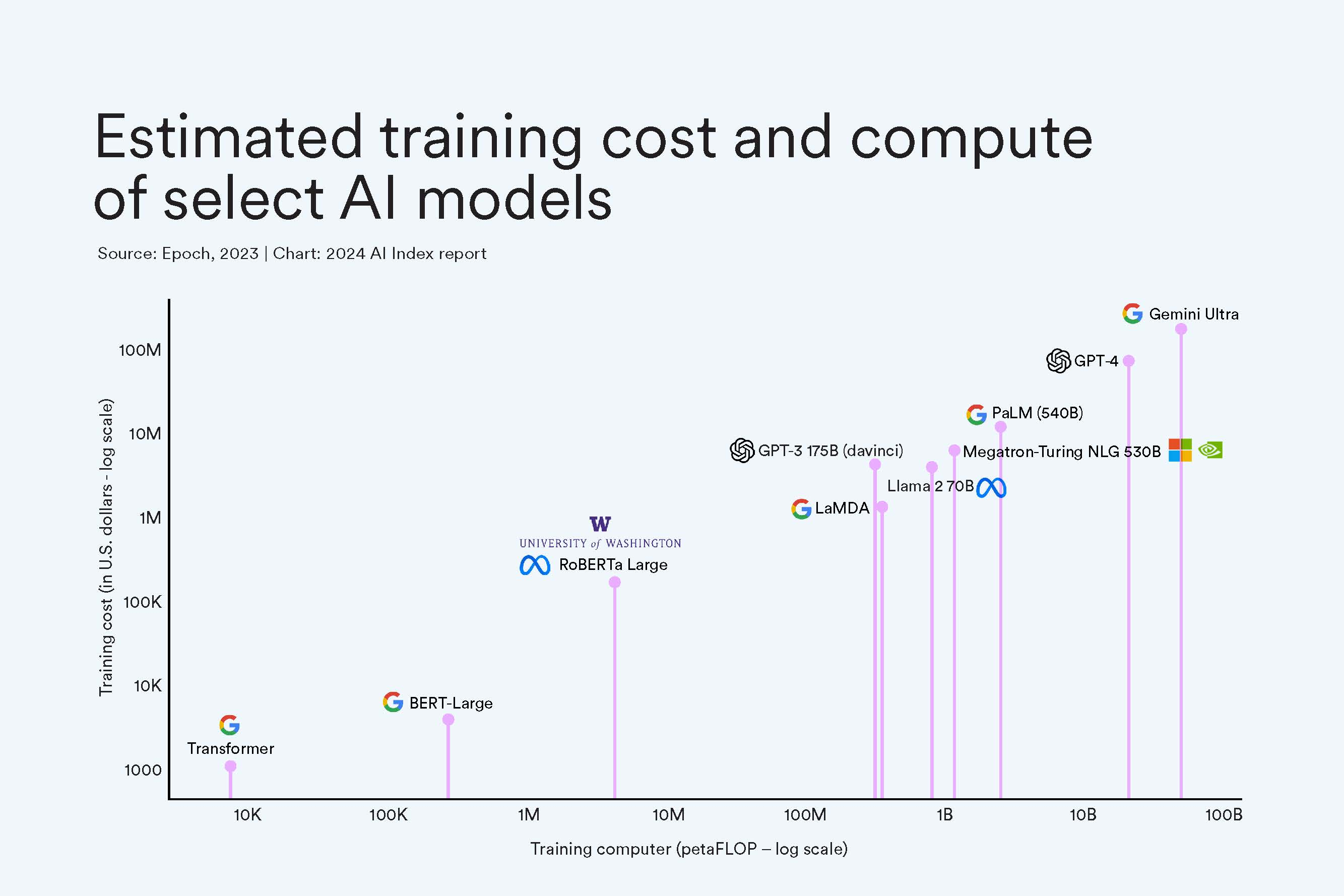

Escalating infrastructure costs

Governance challenges in agentic task execution

Competitive pressure triggering rapid, potentially unstable releases

Conclusion

Gemini 3.1 Pro represents a meaningful step forward in Google’s effort to position its AI systems as advanced reasoning engines rather than simple conversational tools.

While benchmark gains are promising, long-term impact will depend on real-world deployment stability, enterprise trust, and the model’s ability to manage increasingly autonomous workflows.

The evolution from “chat assistant” to “intelligent execution layer” appears well underway.